How Cloudflare Engineered High-Performance Infrastructure for Large Language Models

Introduction

Large language models (LLMs) demand immense computational power and network bandwidth. Cloudflare recently unveiled a new infrastructure design tailored to run these models across its global network. The key innovation? Separating input processing from output generation onto distinct, optimized systems. This how-to guide breaks down the steps Cloudflare took to build this high-performance infrastructure, based on their announced approach. Whether you're planning a similar system or just curious about the architecture, these steps provide a blueprint.

What You Need

- Understanding of LLM workloads: Recognize that input and output stages have different performance requirements.

- Global network of data centers: Distributed points of presence (PoPs) to reduce latency.

- Specialized compute hardware: GPUs for training/inference, separate CPUs or accelerators for text preprocessing and postprocessing.

- Software for load balancing and request routing: To direct input and output streams to appropriate systems.

- Monitoring tools: To track performance and optimize resource allocation.

- Budget for costly hardware: LLM infrastructure is expensive; plan for scaling.

Step-by-Step Guide

Step 1: Analyze Input and Output Requirements

LLMs handle voluminous amounts of text both incoming (prompts) and outgoing (generations). Input processing often requires parsing, tokenization, and context management. Output generation is compute-intensive, demanding high memory bandwidth and parallel processing. Recognize that these stages have different bottlenecks—input may be I/O bound, while output is compute bound. Document the expected traffic patterns and peak loads for each stage.

Step 2: Separate Input Processing from Output Generation

In traditional designs, a single system handles both input and output. Cloudflare’s innovation is to decouple these functions. Assign dedicated servers or clusters for input preprocessing (e.g., tokenization, prompt caching) and separate systems for output generation (e.g., model inference). This prevents resource contention and allows independent scaling. Use a load balancer to direct incoming requests to the input processing tier.

Step 3: Optimize Hardware for Each Stage

Input processing can often run on CPU-based servers with fast storage and high network throughput. Output generation requires GPU accelerators or custom AI chips. Cloudflare likely uses heterogeneous hardware tailored to each task. For the output stage, invest in high-bandwidth memory and interconnects to reduce latency. For input, prioritize fast SSDs and ample RAM for caching.

Step 4: Deploy Across a Global Network

Leverage Cloudflare’s distributed edge network to bring processing closer to users. Deploy both input and output processing units at multiple PoPs. This minimizes round-trip time for input ingestion and output delivery. Use anycast routing to direct users to the nearest PoP. Ensure synchronization between input and output stages if they reside in different locations.

/presentations/game-vr-flat-screens/en/smallimage/thumbnail-1775637585504.jpg)

Step 5: Implement Efficient Request Routing

Create a routing layer that maps incoming requests to the appropriate input processing node. After input is processed, the intermediate result (e.g., a tokenized prompt) should be forwarded to an output generation node. Cloudflare may use internal load balancing with affinity rules to keep related requests on the same infrastructure. Consider using message queues (e.g., Apache Kafka) to decouple the stages.

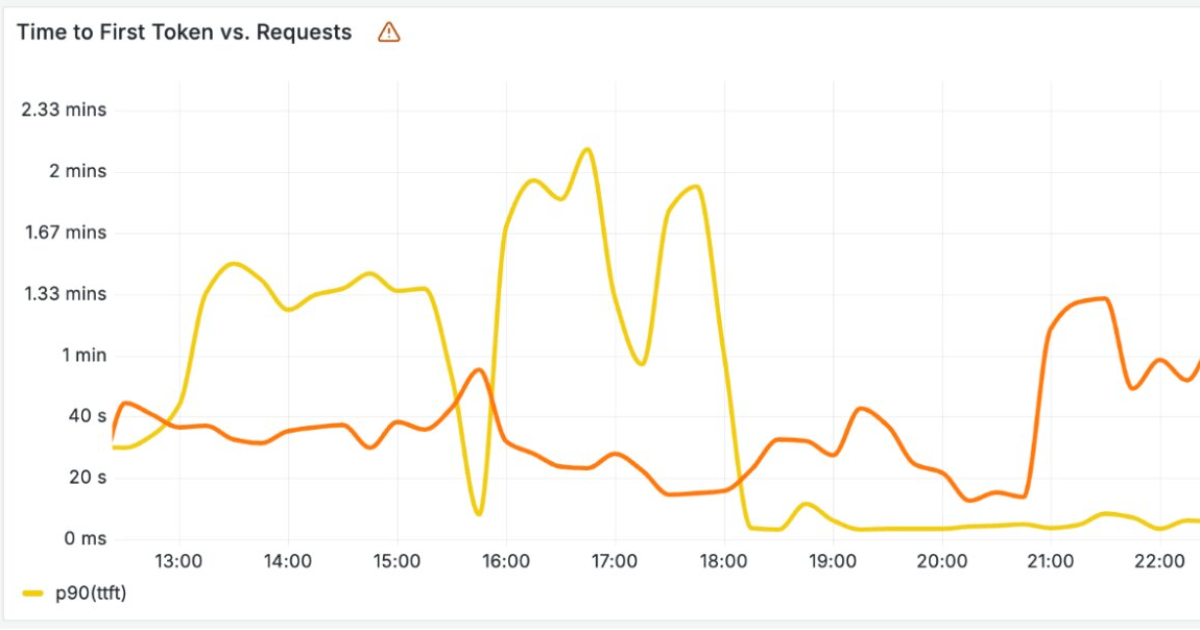

Step 6: Monitor and Tune Performance

Set up telemetry for both stages. Track metrics like input preprocessing time, inference time, cache hit rates, and network latency. Cloudflare’s global network provides wide visibility—use that data to right-size hardware and adjust routing policies. Continuously tune the balance between input and output capacity to avoid bottlenecks.

Step 7: Iterate and Scale

As LLMs evolve, requirements change. Cloudflare’s architecture is designed to scale horizontally by adding more nodes to either stage. Periodically review hardware utilization and upgrade when necessary. Also, consider adopting newer technologies like autoscaling based on real-time demand. Cloudflare’s approach is a platform; adapt it to your own infrastructure.

Tips for Success

- Start small: Pilot the separation of input/output on a subset of your traffic before a full rollout.

- Use caching aggressively: Cache frequent input tokens or completed generations to reduce compute loads.

- Balance cost vs. performance: Output generation hardware is expensive—consider spot instances or reserved capacity.

- Leverage CDN features: Cloudflare’s existing CDN can accelerate the delivery of generated text.

- Keep latency low: Place input and output nodes in the same region whenever possible to avoid cross-region delays.

- Stay modular: Ensure that input and output systems communicate via well-defined APIs for easy upgrades.